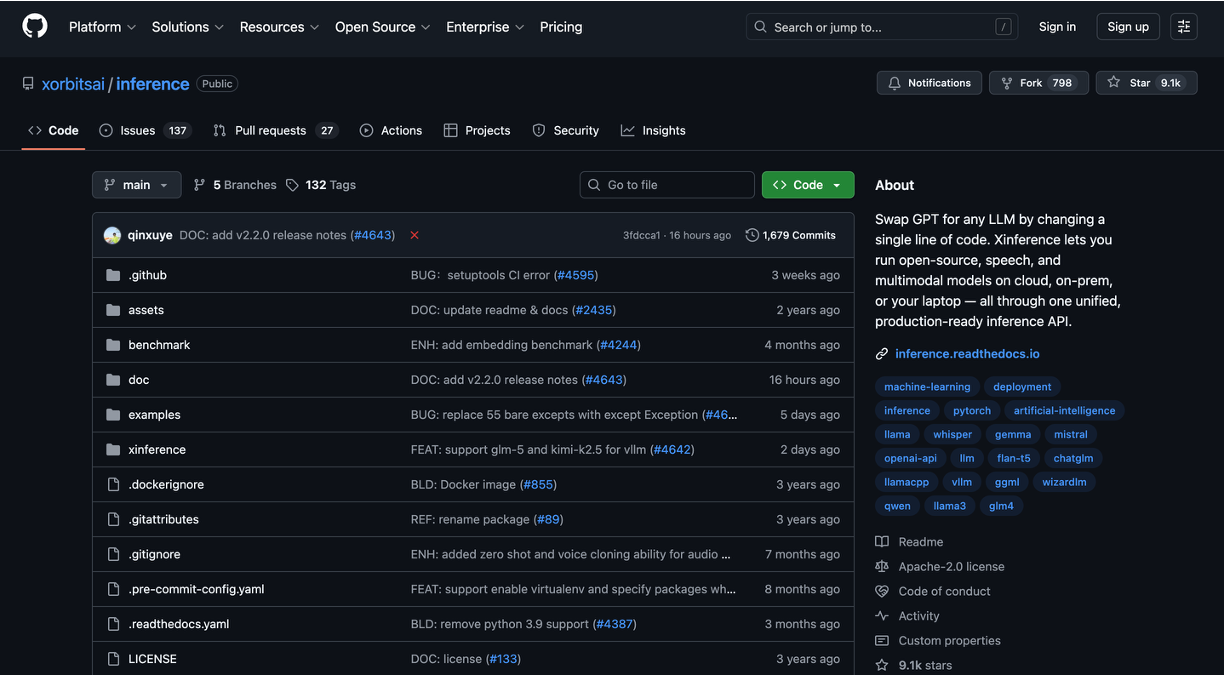

Xinference is a unified, production-ready inference platform. Deploy the latest models, or your own, with a single command. Whether you are a researcher, developer, or data scientist, Xinference lets you put the full potential of AI to work today.